AI and the Brain: Similar in Scale, Different in Design

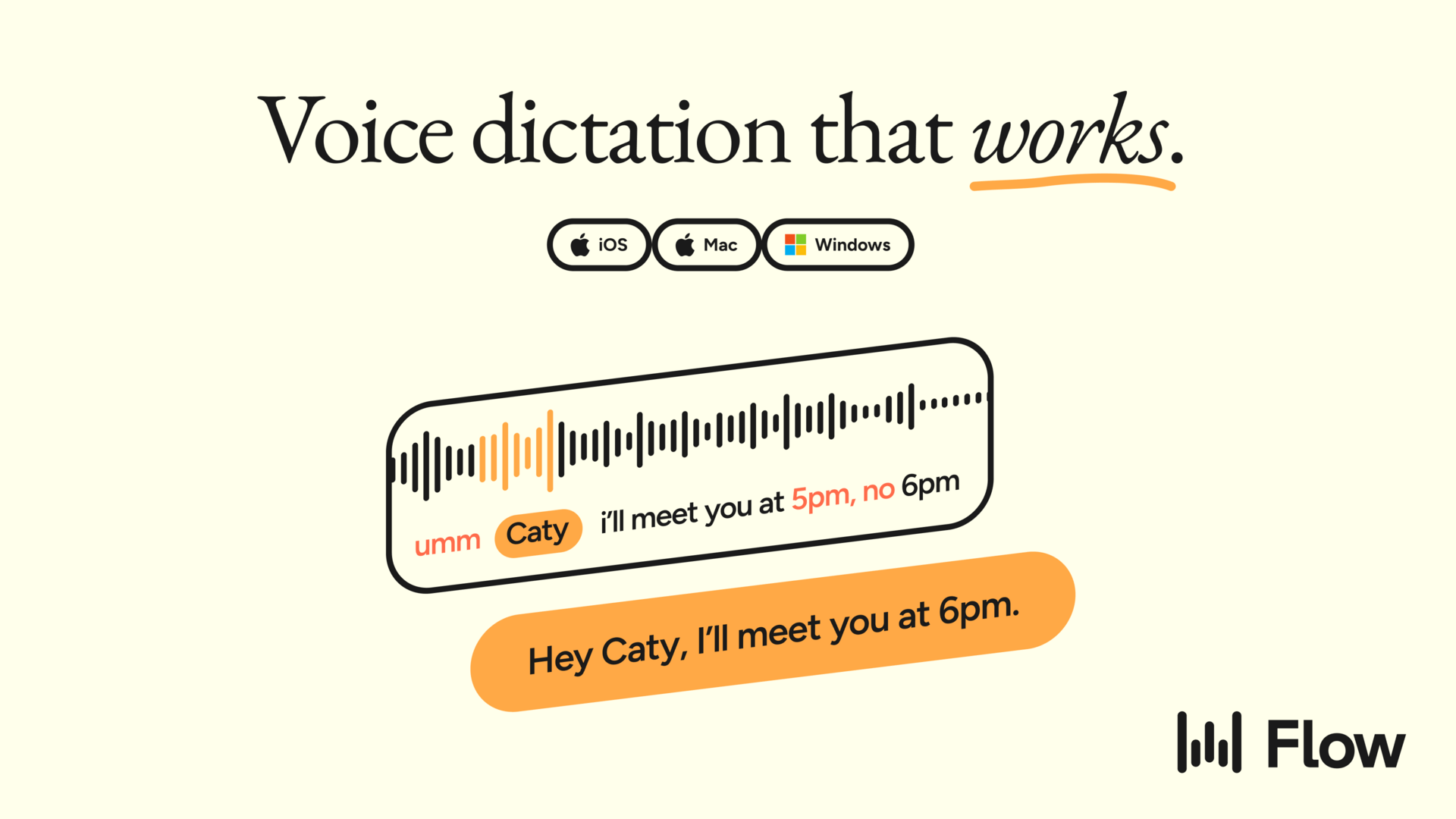

Artificial Intelligence (AI) has grown at an extraordinary pace over the past few years. Not long ago, Large Language Models (LLMs) such as ChatGPT, Gemini, and Claude were largely experimental systems—interesting, sometimes impressive, but often inconsistent. Users could easily trick them into contradictions or expose gaps in their reasoning. Today, however, these systems have evolved into sophisticated tools capable of writing software, assisting scientific research, analyzing massive document collections, and offering structured insights across disciplines. Modern multimodal AI systems no longer rely solely on text; they can interpret images, process audio, generate video, and integrate these streams into unified outputs. Capabilities such as language understanding, reasoning, and even creativity—once considered uniquely human—now appear, at least superficially, in machines.

Despite this rapid transformation, the foundational ideas behind today’s AI systems are not new. Artificial neural networks date back to the mid-twentieth century. In 1943, Warren McCulloch and Walter Pitts introduced a mathematical model of a biological neuron. The McCulloch–Pitts neuron was a simple threshold unit: it summed binary inputs and fired if the total reached a fixed threshold. Later models, beginning with Frank Rosenblatt’s perceptron in the late 1950s, added adjustable weights and produced the form still used today: a unit that takes numerical inputs, multiplies them by adjustable weights, sums the results, and passes the total through a non-linear function to produce an output. Though basic, this abstraction captured the essential idea that complex behavior could emerge from networks of simple units.
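
The weighted-sum-and-activation unit described above can be sketched in a few lines of Python. This is a minimal illustration rather than the 1943 formulation: the sigmoid activation, the function name `neuron`, and the specific weights and bias are assumptions chosen for the example.

```python
import math

def neuron(inputs, weights, bias):
    """Weighted sum of the inputs followed by a non-linear squashing function."""
    total = sum(x * w for x, w in zip(inputs, weights)) + bias
    # The sigmoid maps any real number into the open interval (0, 1).
    return 1.0 / (1.0 + math.exp(-total))

# Hypothetical values picked so the unit behaves roughly like a logical AND.
and_weights = [10.0, 10.0]
and_bias = -15.0

for a in (0, 1):
    for b in (0, 1):
        print(a, b, round(neuron([a, b], and_weights, and_bias), 3))
```

With these hand-picked values the output is close to 1 only when both inputs are 1. A single unit like this can only separate its inputs with a straight line, which is why the interesting behavior comes from connecting many of them.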

Individually, such artificial neurons are extremely limited. Yet when connected in large networks, they become powerful pattern-recognition systems. A crucial mathematical result, the universal approximation theorem, shows that a network with enough such units can approximate any continuous function on a closed, bounded input range to any desired accuracy. The theorem guarantees only that suitable weights exist; it does not say how to find them or how much training data is needed. Still, it gave theoretical support to the idea that, given sufficient size and training data, these networks can model highly complex relationships, an idea that underpins the deep learning methods powering modern AI.
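
To make the approximation idea concrete, here is a small sketch that builds a one-hidden-layer network of ReLU units whose weights are set by hand so that it interpolates a target function. It is an illustration under stated assumptions, not a proof and not a trained model: the ReLU activation, the helper names `build_relu_net` and `max_error`, and the choice of `sin` on the interval from 0 to pi are all picked for the example.

```python
import math

def relu(z):
    return max(0.0, z)

def build_relu_net(f, lo, hi, n_units):
    """Return a one-hidden-layer ReLU network that matches f at n_units + 1 evenly spaced knots."""
    step = (hi - lo) / n_units
    knots = [lo + i * step for i in range(n_units + 1)]
    slopes = [(f(knots[i + 1]) - f(knots[i])) / step for i in range(n_units)]
    # The output weight of unit i is the change in slope at knot i.
    out_weights = [slopes[0]] + [slopes[i] - slopes[i - 1] for i in range(1, n_units)]
    offset = f(lo)

    def net(x):
        # zip stops after n_units pairs, so the last knot has no unit of its own.
        return offset + sum(w * relu(x - k) for w, k in zip(out_weights, knots))

    return net

def max_error(f, net, lo, hi, samples=1000):
    """Largest absolute gap between f and net over an evenly spaced grid."""
    grid = (lo + (hi - lo) * i / samples for i in range(samples + 1))
    return max(abs(f(x) - net(x)) for x in grid)

for n in (4, 16, 64):
    net = build_relu_net(math.sin, 0.0, math.pi, n)
    print(n, "units, max error:", max_error(math.sin, net, 0.0, math.pi))
```

The printed error shrinks as the number of units grows, which is the behavior the theorem describes. In practice the weights are not constructed by hand like this; they are found by training.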

As AI systems scaled up in size, both in parameters and in training data, their capabilities expanded dramatically. Some recent large models also use mixture-of-experts (MoE) architectures, an idea that dates back to the early 1990s. Mixtral is an openly documented example, and GPT-4 is widely reported, though not officially confirmed, to use one. Instead of activating the entire neural network for every input, MoE systems selectively engage specialized sub-networks depending on the input. This approach improves efficiency: the total number of parameters can grow without a proportional increase in the computation spent on each input. Interestingly, this selective activation resembles how the human brain recruits different regions for different functions. When solving a mathematical problem, certain neural circuits become active; when listening to music, others dominate. Both systems exhibit specialization and selective engagement.
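
The selective activation described above can be sketched as top-k routing. This is a toy illustration under stated assumptions, not the design of any particular model: the names `moe_forward` and `gate_weights`, the four toy experts, and the choice of `top_k=2` are all hypothetical.

```python
import math

def softmax(scores):
    peak = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def moe_forward(x, gate_weights, experts, top_k=2):
    """Route x to the top_k highest-scoring experts and mix their outputs."""
    # One gate score per expert: dot product of the input with that expert's gate vector.
    scores = [sum(xi * wi for xi, wi in zip(x, w)) for w in gate_weights]
    chosen = sorted(range(len(experts)), key=lambda i: scores[i], reverse=True)[:top_k]
    # Renormalise over the chosen experts only; the others are never evaluated.
    mix = softmax([scores[i] for i in chosen])
    output = sum(p * experts[i](x) for p, i in zip(mix, chosen))
    return output, chosen

# Hypothetical toy experts: each is just a small function of the input vector.
experts = [
    lambda x: sum(x),
    lambda x: max(x),
    lambda x: min(x),
    lambda x: x[0] - x[1],
]
gate_weights = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0], [0.0, -1.0]]

output, chosen = moe_forward([2.0, 0.5], gate_weights, experts, top_k=2)
print("experts used:", chosen, "output:", output)
```

Only two of the four experts run for this input, so the cost per input depends on `top_k` rather than on the total number of experts. In a real model the experts are neural sub-networks and the gate is learned alongside them.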

However, similarities in scale and selective activation should not obscure the profound differences in design between artificial neural networks and biological brains. The human brain is the product of millions of years of evolution. It operates through complex electrochemical processes, with approximately 86 billion neurons interconnected by trillions of synapses. These connections are dynamic, constantly reshaped by experience, emotion, and context. Learning in the brain is not merely about adjusting numerical weights; it involves biochemical signaling, structural plasticity, and feedback loops that integrate perception, memory, and action.

Artificial neural networks, by contrast, rely on mathematical optimization. They learn by adjusting weights through algorithms such as gradient descent, guided by massive datasets and objective functions. Their “understanding” is statistical rather than experiential. While they can simulate conversation or reasoning, they do not possess consciousness, emotions, or subjective awareness. Their outputs emerge from pattern recognition across vast corpora of data, not from lived experience.
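
Weight adjustment by gradient descent can be shown on the smallest possible case: one weight and one bias fitted to a handful of points. This is a minimal sketch, assuming a mean-squared-error objective; the function name `train_linear`, the learning rate, the step count, and the synthetic data are assumptions for the example, and real networks apply the same idea to millions or billions of weights.

```python
def train_linear(data, lr=0.05, steps=500):
    """Fit y = w * x + b by gradient descent on the mean squared error."""
    w, b = 0.0, 0.0
    n = len(data)
    for _ in range(steps):
        # Gradients of the mean squared error with respect to w and b.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        # Step each parameter a little way downhill.
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b

# Synthetic data generated from y = 3x + 1, so the "right answer" is known.
data = [(x, 3.0 * x + 1.0) for x in (0.0, 1.0, 2.0, 3.0, 4.0)]
w, b = train_linear(data)
print(round(w, 3), round(b, 3))
```

The fitted values approach w = 3 and b = 1. Nothing in the loop refers to what the numbers mean; the procedure only reduces a measured error, which is the sense in which the resulting "understanding" is statistical.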

Energy efficiency provides another stark contrast. The human brain operates on roughly 20 watts of power—less than many household light bulbs—while performing tasks that AI systems struggle to match. Training large models requires enormous computational resources, specialized hardware, and significant energy consumption. Even inference—generating responses in real time—demands data centers with substantial power requirements. In this sense, the brain remains vastly more efficient than current AI systems.

Furthermore, the brain integrates multiple modalities seamlessly from birth. Humans learn language alongside sensory experiences, motor actions, and social interactions. This embodied learning shapes cognition in ways that purely data-driven systems cannot replicate. Multimodal AI models attempt to bridge this gap by training on text, images, audio, and video simultaneously. Yet their integration remains fundamentally different from the deeply embodied, context-rich learning of humans.

It is tempting to draw direct parallels between AI networks and the brain, especially as model sizes approach billions or even trillions of parameters. But scale alone does not determine equivalence. The architecture, learning mechanisms, and underlying substrates differ profoundly. Artificial neural networks are engineered systems optimized for specific objectives; the brain is a biological organ shaped by survival pressures, capable of self-awareness and adaptive reasoning in unpredictable environments.

Nevertheless, the comparison is not without value. Neuroscience has inspired AI architectures, and AI tools now assist neuroscientists in analyzing brain data. The exchange is bidirectional: insights into biological intelligence inform machine learning, while computational models provide frameworks for testing hypotheses about cognition. Rather than viewing AI as a replica of the brain, it may be more accurate to see it as a parallel form of intelligence—one that shares certain abstract principles but diverges fundamentally in implementation.

As AI continues to advance, understanding these similarities and differences becomes increasingly important. Appreciating the distinctions helps temper exaggerated claims about machine consciousness while recognizing the genuine progress in computational capability. AI and the brain may converge in scale, but they remain distinct in design, origin, and essence. The future will likely see deeper collaboration between neuroscience and artificial intelligence, not to replace the human mind, but to better understand it—and perhaps to build tools that extend its reach.